AI Safety and Risk Readiness Report 2026 - Key Insights and Summary

TL;DR

AI Security Landscape in 2026: Risks, Gaps, and Mitigation

AI Adoption and Governance Deficit

AI tools are deployed at 73% of organizations, but only 7% have real-time security and policy enforcement. The result is a 66-point structural deficit, with AI adoption outpacing security controls. AI Risk and Readiness Report 2026 notes that this gap is widening.

A significant 39% have experienced AI-related data exposure, yet 17% took no action afterward. Over a third report fragmented AI adoption, with teams deploying tools independently and no shared security policies. Cybersecurity Insiders highlights that 48% predict governance failures, specifically shadow AI and over-permissive access, will trigger the next major AI-related breach.

The simplest fix is to identify the three highest-risk AI use cases, embed enforceable policies into technical controls, and assign an owner for each. AI Risk and Readiness Report 2026
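A small risk register is one way to make "three highest-risk use cases, each with an enforceable control and an owner" concrete. The sketch below assumes a simple likelihood x impact score on a hypothetical 1-5 scale; the `AIUseCase` type, the example use cases, and the scores are illustrative assumptions rather than anything prescribed by the report.

```python
from dataclasses import dataclass


@dataclass
class AIUseCase:
    name: str
    likelihood: int      # hypothetical 1-5 scale
    impact: int          # hypothetical 1-5 scale
    control: str = ""    # technical control that enforces the policy
    owner: str = ""      # accountable person or team

    @property
    def risk_score(self) -> int:
        # Simple likelihood x impact scoring; swap in your own risk method.
        return self.likelihood * self.impact


def top_risks(use_cases, n=3):
    """Return the n highest-scoring use cases (ties keep their input order)."""
    return sorted(use_cases, key=lambda u: u.risk_score, reverse=True)[:n]


def gaps(use_cases):
    """Names of use cases still missing an enforceable control or an owner."""
    return [u.name for u in use_cases if not u.control or not u.owner]


# Hypothetical inventory for illustration only.
cases = [
    AIUseCase("Customer data pasted into public chatbots", 4, 5,
              control="inline DLP on chatbot traffic", owner="Security Ops"),
    AIUseCase("Agents with write access to email", 3, 5,
              control="approval gate on outbound send"),
    AIUseCase("Code assistants reading private repos", 3, 3, owner="Platform Eng"),
    AIUseCase("Meeting summarizers", 2, 2),
]

top = top_risks(cases)
for u in top:
    print(u.risk_score, u.name)
print("needs work:", gaps(top))
```

The `gaps` check is the useful part: a top-three use case without both a technical control and a named owner is still a written policy, not an enforced one.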

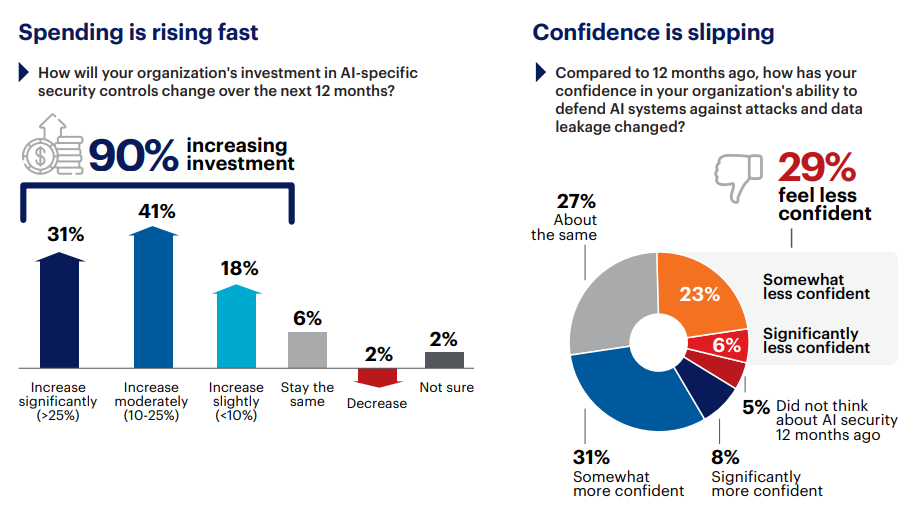

Budget vs. Confidence

90% of organizations increased AI security spending this year, but 29% feel less secure. Cybersecurity Insiders attributes this to business pressure (34%), skill gaps (25%), and legacy tools (21%).

51% rate their technical controls as weak, 50% rate visibility as weak, and 61% rate Non-Human Identity (NHI) governance as weak. Redirecting budget starts with mapping current AI security spend against these weakness ratings. AI Risk and Readiness Report 2026
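Mapping spend against weakness ratings can start as simple arithmetic. In this sketch the weakness rates are the survey-wide percentages quoted above, used as stand-ins for your own internal ratings; the per-domain spend figures are hypothetical, and the "gap" metric is a crude heuristic of our own, not a method from the report.

```python
# Share of respondents rating each domain weak, as quoted from the report.
WEAK_RATE = {"technical_controls": 0.51, "visibility": 0.50, "nhi_governance": 0.61}

# Hypothetical: current annual AI security spend per domain (any currency).
spend = {"technical_controls": 400_000, "visibility": 150_000, "nhi_governance": 50_000}


def underfunding_ranking(weak_rate, spend):
    """Rank domains by how far their weakness rating exceeds their budget share.

    A crude heuristic: a positive gap means the domain is rated weak more
    often than its slice of the budget would suggest.
    """
    total = sum(spend.values())
    rows = []
    for domain, rate in weak_rate.items():
        share = spend.get(domain, 0) / total
        rows.append((domain, round(rate - share, 3)))
    return sorted(rows, key=lambda row: row[1], reverse=True)


for domain, gap in underfunding_ranking(WEAK_RATE, spend):
    print(f"{domain:20s} gap={gap:+.3f}")
```

With these made-up numbers, NHI governance tops the list: it is rated weakest yet receives the smallest share of spend.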

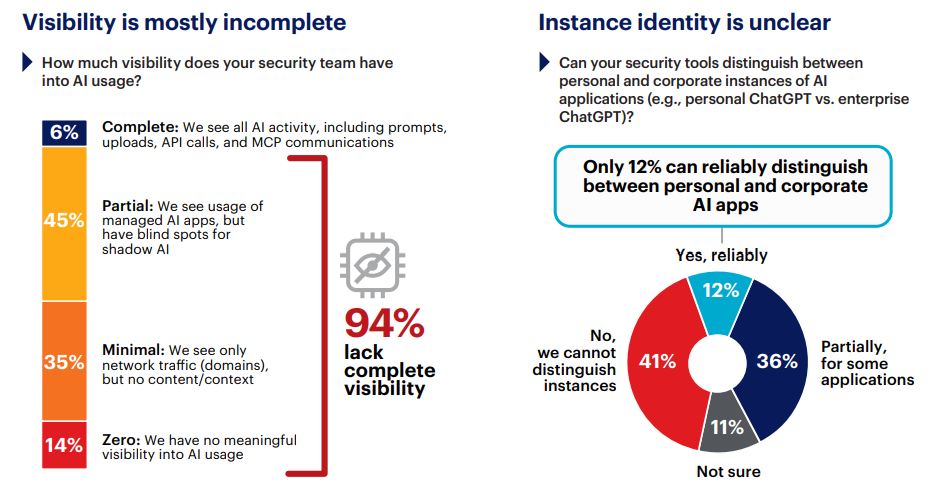

Visibility Gaps in AI Activity

Only 6% of organizations report complete visibility into AI usage. Cybersecurity Insiders states that 45% have partial visibility limited to managed applications, 35% see only network-level traffic patterns, and 14% have no visibility at all. 88% cannot reliably distinguish personal AI accounts from corporate instances, which the report ranks as the #1 technical blind spot. AI Risk and Readiness Report 2026

Closing this visibility gap means extending activity-level monitoring to SaaS, API, and Machine-to-Machine (M2M) traffic, starting with account-level distinction. AI Risk and Readiness Report 2026
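Account-level distinction is the suggested starting point. A minimal sketch, assuming your proxy or SaaS logs expose the signed-in account's email address (many do not, which is part of the blind spot), classifies each AI session by that address's domain. `CORPORATE_DOMAINS`, the app names, and the log records are hypothetical.

```python
from collections import Counter

# Hypothetical: the email domains your identity provider issues.
CORPORATE_DOMAINS = {"example.com"}


def classify_account(email):
    """Label an AI session as corporate, personal, or unknown by its account domain."""
    if not email or "@" not in email:
        return "unknown"
    domain = email.rsplit("@", 1)[1].lower()
    return "corporate" if domain in CORPORATE_DOMAINS else "personal"


# Hypothetical proxy or SaaS log records that expose the signed-in account.
events = [
    {"app": "chat-ai", "account": "alice@example.com"},
    {"app": "chat-ai", "account": "alice.home@gmail.com"},
    {"app": "code-ai", "account": "bob@Example.com"},
    {"app": "image-ai", "account": None},
]

summary = Counter(classify_account(e["account"]) for e in events)
print(summary)
```

The "unknown" bucket matters as much as the other two: sessions with no identifiable account are exactly where network-level visibility stops being enough.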

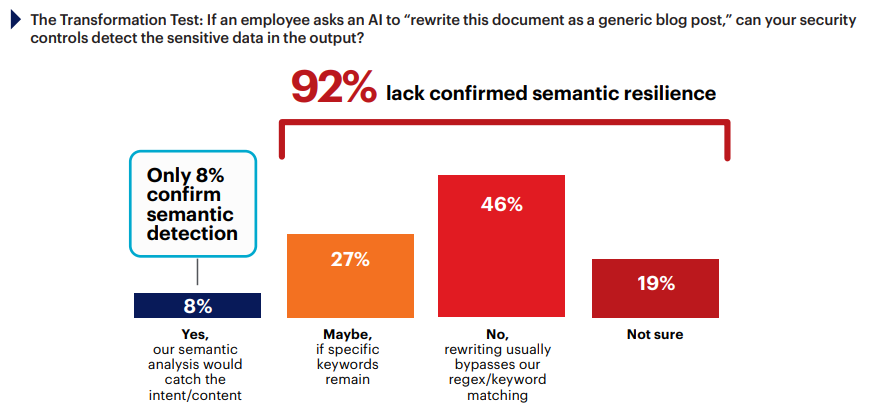

Legacy DLP Limitations

Traditional Data Loss Prevention (DLP) operates at the syntactic layer, matching character sequences against predefined rules. AI Risk and Readiness Report 2026 reports that AI operates at the semantic layer, transforming content while preserving intent. 46% said their controls would miss policy violations because rewriting bypasses regex and keyword matching.

Run a transformation test against your own stack: take a classified document, ask an AI tool to rephrase it, and see if your controls flag the output. Cybersecurity Insiders
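The transformation test can be illustrated with a toy syntactic DLP rule set. This is a sketch under stated assumptions: the regex patterns, the document text, and the paraphrase are all hypothetical, and the paraphrase is hard-coded to stand in for an AI tool's rewrite (in a real test you would paste the tool's actual output).

```python
import re

# Hypothetical syntactic DLP rules: a classification marker and a project codename.
DLP_PATTERNS = [
    re.compile(r"\bCONFIDENTIAL\b"),
    re.compile(r"\bProject\s+Falcon\b", re.IGNORECASE),
]


def dlp_flags(text):
    """Return the patterns that match the text, as a regex/keyword DLP would."""
    return [p.pattern for p in DLP_PATTERNS if p.search(text)]


original = "CONFIDENTIAL: Project Falcon launches in Q3 at a price of $49."
# Stand-in for an AI rewrite of the same content; the meaning is preserved,
# but none of the literal strings the rules look for survive.
paraphrased = ("Internal only: our falcon-themed initiative ships next summer "
               "for just under fifty dollars.")

print("original flagged:", dlp_flags(original))
print("paraphrase flagged:", dlp_flags(paraphrased))
```

Both rules fire on the original and neither fires on the paraphrase, which is the syntactic-versus-semantic gap described above.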

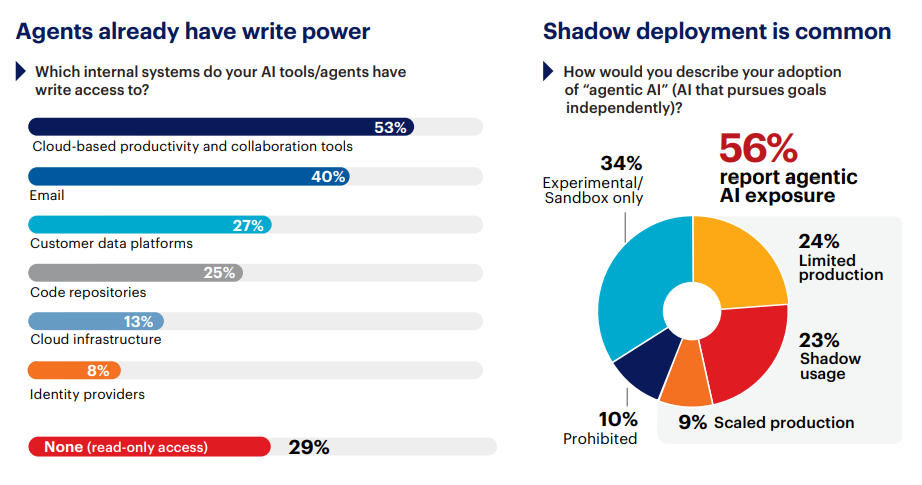

Unsupervised AI Agents

56% report real agentic AI risk exposure: 24% in limited production, 9% at scale handling core business logic, and 23% through shadow deployments. AI Risk and Readiness Report 2026 adds that 32% have zero visibility into agent actions, and 36% are blind to M2M AI traffic. 53% grant AI tools write access to cloud productivity and collaboration suites, and 40% to email.

Audit which AI tools hold write access today and establish approval gates for any action that creates accounts, modifies permissions, or moves data externally. Cybersecurity Insiders
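Both steps, the write-access audit and the approval gate, can be sketched in a few lines. The `Grant` record, the tool names, and the action-type strings are hypothetical; the three gated action types mirror the ones named in the text.

```python
from dataclasses import dataclass
from typing import Optional

# Action types the text singles out for approval gates.
HIGH_RISK_ACTIONS = {"create_account", "modify_permissions", "external_data_transfer"}


@dataclass
class Grant:
    tool: str       # hypothetical AI tool name
    resource: str   # e.g. "docs", "email"
    access: str     # "read" or "write"


def write_grants(grants):
    """Audit step: every (tool, resource) pair that currently has write access."""
    return sorted({(g.tool, g.resource) for g in grants if g.access == "write"})


def gate(action_type: str, approved_by: Optional[str] = None) -> str:
    """Let low-risk actions through; hold high-risk ones until a named human approves."""
    if action_type in HIGH_RISK_ACTIONS and not approved_by:
        return "pending_approval"
    return "allowed"


grants = [
    Grant("assistant-a", "docs", "write"),
    Grant("assistant-a", "email", "read"),
    Grant("agent-b", "email", "write"),
    Grant("agent-b", "docs", "write"),
]
print(write_grants(grants))
print(gate("modify_permissions"))
print(gate("modify_permissions", approved_by="security-lead"))
```

Requiring a named approver (rather than a boolean flag) keeps an audit trail of who released each high-risk action.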

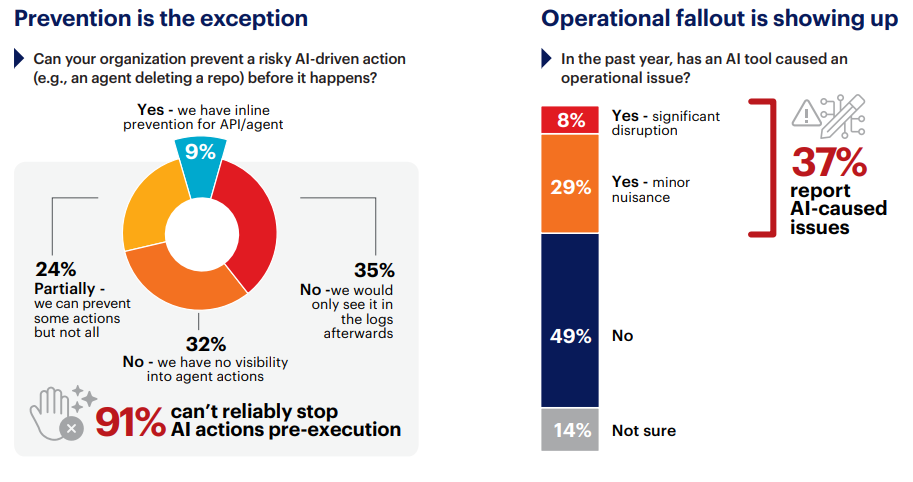

Agent Action Interception

Once an agent initiates a harmful action, only 9% of organizations can intervene before it completes. AI Risk and Readiness Report 2026 details that 37% experienced operational issues caused by AI agents in the past twelve months, and 8% reported issues that caused outages or data corruption.

Define what anomalous looks like for agent actions, build detection rules for those patterns, and require human-in-the-loop approval for high-risk actions. Cybersecurity Insiders
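One possible definition of "anomalous" combines two simple rules: an agent performing an action it never performed during a known-good baseline period, and an agent exceeding a volume threshold within one time window. This is a minimal sketch; the baseline approach, the threshold of 50, and the agent and action names are assumptions for illustration.

```python
from collections import Counter


def build_baseline(history):
    """history: (agent, action) pairs observed during a known-good period."""
    baseline = {}
    for agent, action in history:
        baseline.setdefault(agent, set()).add(action)
    return baseline


def detect(events, baseline, max_per_window=50):
    """Return alerts for one time window of (agent, action) events."""
    alerts = []
    # Rule 1: volume spike per agent within the window.
    for agent, n in Counter(agent for agent, _ in events).items():
        if n > max_per_window:
            alerts.append((agent, "volume", n))
    # Rule 2: an action type this agent never performed in the baseline.
    for agent, action in dict.fromkeys(events):  # de-duplicate, keep order
        if action not in baseline.get(agent, set()):
            alerts.append((agent, "novel_action", action))
    return alerts


baseline = build_baseline([("agent-b", "read_file"), ("agent-b", "summarize")])
window = [("agent-b", "read_file")] * 60 + [("agent-b", "delete_file")]
alerts = detect(window, baseline)
print(alerts)
```

In practice, alerts like these would route the triggering action to a human-in-the-loop approval step rather than just a log.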

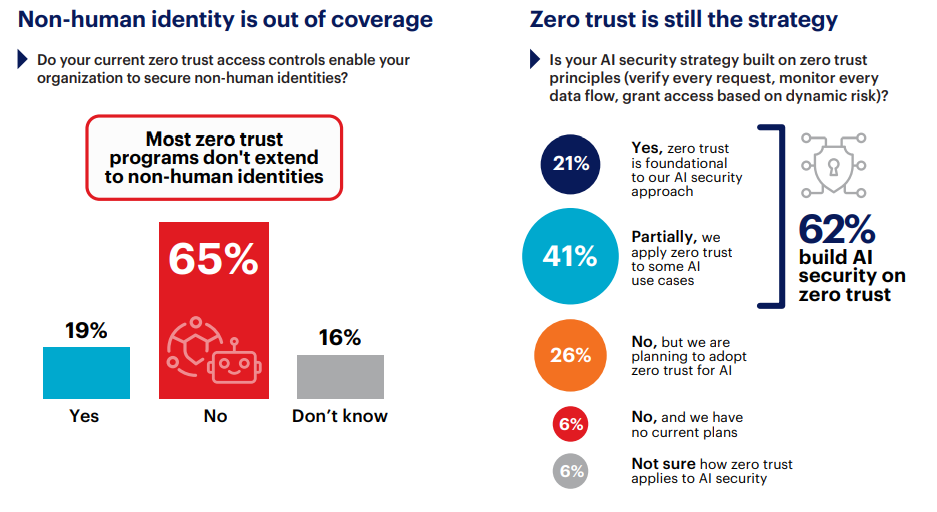

Zero Trust and Non-Human Identities

62% apply zero trust principles to AI security, but 65% say their current zero trust controls cannot secure Non-Human Identities (NHI). AI Risk and Readiness Report 2026 indicates that NHI governance scores lowest across all dimensions, with 61% rating it weak, yet 78% expect NHI growth to outpace human identity growth.

Converge the protocol layer and the identity layer so that agent credentials, scopes, and permissions are evaluated with the same rigor applied to human identities. AI Risk and Readiness Report 2026
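Evaluating agent credentials with the same rigor as human ones can mean running both through one authorization path: expiry, scope, and accountability checks. The `Principal` fields, scope names, and the rule that every agent identity must have a human owner are illustrative assumptions, not requirements taken from the report.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Principal:
    id: str
    kind: str                    # "human" or "agent"
    scopes: frozenset
    expires_at: datetime
    owner: Optional[str] = None  # accountable human behind an agent identity


def authorize(p, required_scope, now=None):
    """Single check applied to human and non-human identities alike."""
    if now is None:
        now = datetime.now(timezone.utc)
    if now >= p.expires_at:
        return False, "credential expired"
    if required_scope not in p.scopes:
        return False, f"missing scope {required_scope}"
    if p.kind == "agent" and not p.owner:
        return False, "agent has no accountable owner"
    return True, "ok"


now = datetime(2026, 1, 1, tzinfo=timezone.utc)
alice = Principal("alice", "human", frozenset({"mail.send"}), now + timedelta(hours=8))
bot = Principal("mail-agent", "agent", frozenset({"mail.read"}),
                now + timedelta(minutes=15), owner="alice")
orphan = Principal("old-agent", "agent", frozenset({"mail.read"}), now + timedelta(days=365))

print(authorize(alice, "mail.send", now))
print(authorize(bot, "mail.send", now))
print(authorize(orphan, "mail.read", now))
```

The short-lived agent credential and the ownerless long-lived one illustrate two common NHI weaknesses the same check can catch.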

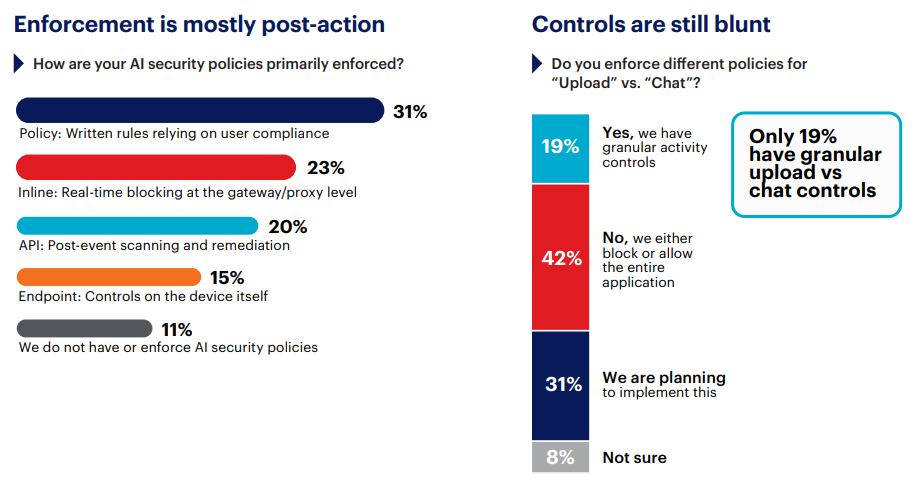

AI Security Enforcement

31% enforce AI security through written policies and employee compliance. Cybersecurity Insiders adds that 20% rely on post-event API scanning, which catches violations only after the action completes, while 23% enforce policy inline in real time. 42% control AI applications through binary block-or-allow decisions for entire platforms.

Unify CASB, DLP, and access policy so a single evaluation draws on content classification, user identity, and AI instance type before an action completes. Cybersecurity Insiders
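A unified evaluation takes all three signals at once and returns a single decision before the action completes. The policy rules, group names, and classification labels below are hypothetical; the point of the sketch is the shape of the function, one decision over identity, instance type, and content, rather than the specific rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user_group: str      # from the identity provider, e.g. "finance"
    instance_type: str   # "corporate" or "personal" AI account
    classification: str  # content label: "public", "internal", or "restricted"


def evaluate(req: Request) -> str:
    """One decision combining identity, AI instance type and content, instead of
    separate CASB, DLP and access checks each seeing only part of the picture."""
    if req.instance_type == "personal" and req.classification != "public":
        return "block"
    if req.classification == "restricted":
        # Hypothetical rule: only these groups may use restricted content with AI.
        return "allow_and_log" if req.user_group in {"legal", "finance"} else "block"
    return "allow"


print(evaluate(Request("engineering", "personal", "internal")))
print(evaluate(Request("engineering", "corporate", "internal")))
print(evaluate(Request("finance", "corporate", "restricted")))
```

Unlike a platform-wide block-or-allow switch, the same AI application can yield different outcomes depending on who is sending what to which account.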

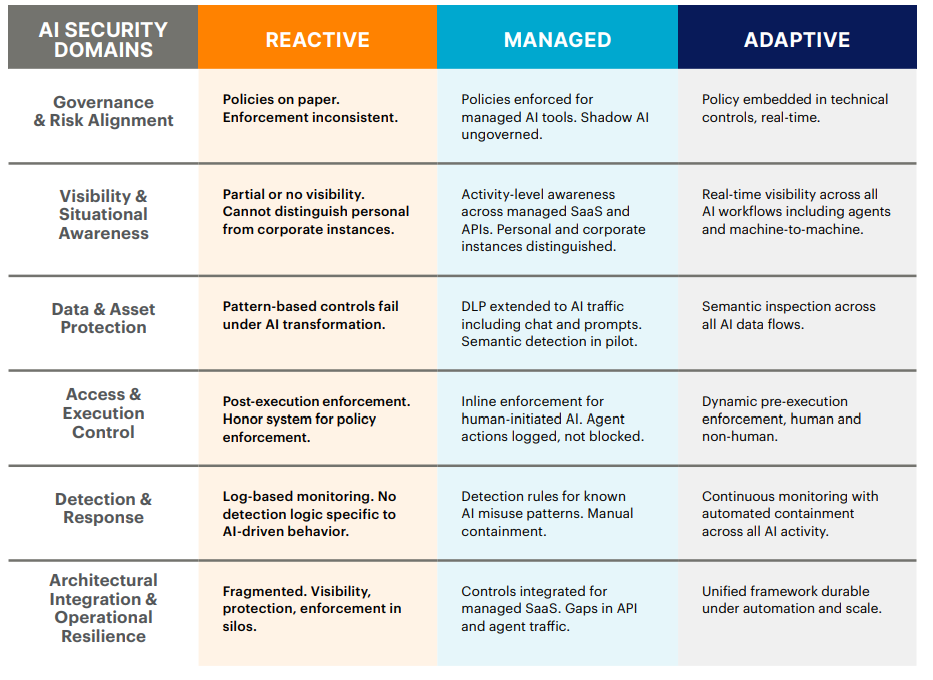

AI Security Maturity Model

The Capability Maturity Model maps six core AI security domains across three tiers of readiness. AI Risk and Readiness Report 2026 indicates that 38% wish they had put governance in place before adopting AI at scale, and 25% wish they had invested in visibility controls sooner.

Key Actions to Close the Execution Gap

- Close AI Visibility Gaps: Expand activity-level monitoring across SaaS, API, and M2M traffic. AI Risk and Readiness Report 2026

- Translate Policy Into Enforceable Guardrails: Embed enforceable policies into technical controls. AI Risk and Readiness Report 2026

- Deploy Semantic Data Protection: Deploy content-aware inspection that evaluates meaning at the point of transfer. AI Risk and Readiness Report 2026

- Enforce Before Execution: Establish approval gates for any action that creates accounts, modifies permissions, or moves data externally. AI Risk and Readiness Report 2026

- Modernize Detection and Containment: Define what anomalous looks like for agent actions and build detection rules. AI Risk and Readiness Report 2026

- Reduce Control Fragmentation: Unify CASB, DLP, and access policy into a single evaluation. AI Risk and Readiness Report 2026

General-Purpose AI Capabilities

General-purpose AI refers to AI models and systems that can perform a variety of tasks rather than being specialized for one specific function or domain. International AI Safety Report 2026 notes that these models are based on deep learning and require substantial computational resources, large datasets, and specialized expertise.

Since the publication of the last Report (January 2025), capability improvements have increasingly come from post-training techniques and extra computational resources at the time of use, rather than from increasing model size alone. International AI Safety Report 2026

Examples of general-purpose AI systems include language systems such as Apertus, Claude-4.5, Command A, and GPT-5.

Risks Associated with General-Purpose AI

General-purpose AI risks fall into three categories: malicious use, malfunctions, and systemic risks. International AI Safety Report 2026

Malicious use includes AI-generated content for scams, fraud, and cyberattacks. International AI Safety Report 2026 notes AI systems can discover software vulnerabilities and write malicious code. Malfunctions include reliability challenges and loss of control scenarios. Systemic risks include labor market impacts and risks to human autonomy.

Managing AI Risks

Managing general-purpose AI risks is difficult due to technical and institutional challenges. International AI Safety Report 2026 states that risk management practices include threat modeling, capability evaluations, and incident reporting. Technical safeguards are improving but still show significant limitations. Open-weight models pose distinct challenges as they cannot be recalled once released and their safeguards are easier to remove.
